CAPTCHAFORUM

Administrator

Data extraction takes many forms and can be complicated. From preventing your IP from getting banned, to bypassing captchas, to parsing the source correctly, running headless Chrome for JavaScript rendering, cleaning the data, and then generating it in a usable format, a lot of effort goes in. By the way, if you wonder whether search engines can parse and understand content rendered by JavaScript, check out this article.

Clients of the 2captcha service face a wide variety of tasks, from parsing data from small sites to collecting large amounts of information from large resources or search engines. Many services integrated with 2captcha exist to automate and simplify this work, but it is not easy to make sense of this variety and pick the optimal solution for a specific task.

With the help of our customers, we have studied popular services for data parsing and compiled the top 10 most convenient and flexible of them. Since this list includes a wide range of solutions, from open source projects to hosted SaaS solutions to desktop software, there is sure to be something for everyone looking to make use of web data!

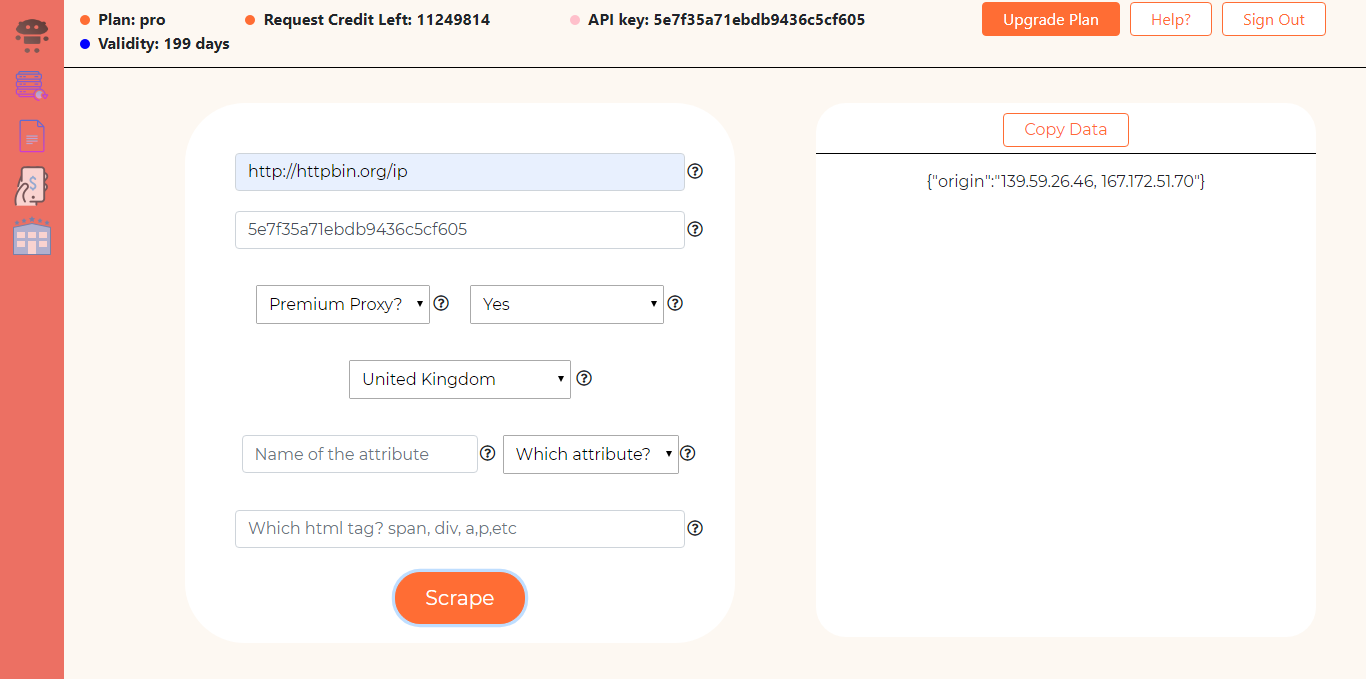

Scrapingdog

Scrapingdog is a high-end web scraping tool that provides millions of proxies for scraping. It offers data scraping services with capabilities such as rendering JavaScript and bypassing captchas. Scrapingdog offers two kinds of solutions:

- The software is built for users with less technical knowledge. As the image above shows, you can manually adjust almost anything, from rendering JavaScript to handling premium proxies. The software also returns structured data in JSON format if you specify the particular tags and attributes of the data you are trying to scrape.

- The API is built for developers. You can scrape websites just by passing queries inside the API URI. You can read its documentation here.
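To make the "queries inside the API URI" idea concrete, here is a minimal sketch of how such a request URL could be assembled. The endpoint `https://api.example.com/scrape` and the parameter names `api_key`, `url`, and `render_js` are hypothetical placeholders, not Scrapingdog's actual API; check the service's documentation for the real names.

```python
from urllib.parse import urlencode, urlsplit, parse_qs

# Hypothetical endpoint and parameter names, for illustration only.
API_ENDPOINT = "https://api.example.com/scrape"


def build_scrape_url(api_key: str, target_url: str, render_js: bool = False) -> str:
    """Return a GET URL that carries the whole scraping request in its query string."""
    params = {
        "api_key": api_key,
        "url": target_url,  # urlencode percent-encodes the target URL safely
        "render_js": "true" if render_js else "false",
    }
    return f"{API_ENDPOINT}?{urlencode(params)}"


request_url = build_scrape_url(
    "YOUR_API_KEY",
    "https://example.org/products?page=2",
    render_js=True,
)
print(request_url)
```

The target URL has to be percent-encoded, otherwise its own `?` and `=` characters would be confused with the parameters of the API call. `urlencode` takes care of that.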

MyDataProvider

MyDataProvider is a brilliant solution for e-commerce companies to manage their information. It is specifically designed for e-commerce data extraction:

- categories and hierarchy

- product prices, quantities

- sales price, wholesale price, discount price

- features

- options

- variants with related images, prices, quantities

It also offers convenient options for exporting collected data in CSV, Excel, JSON, and XML formats, as well as direct export to online stores such as Shopify, WooCommerce, PrestaShop, etc.

The service can apply margin rules to prices, which makes it a universal and complete solution for the e-commerce sector.
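As an illustration of what a margin rule can look like, here is a minimal sketch that marks up a scraped supplier price by a percentage that depends on the price tier. The tiers, percentages, and the `apply_margin` function are assumptions made for this example; they do not describe how MyDataProvider implements its rules.

```python
from decimal import Decimal, ROUND_HALF_UP

# Hypothetical rules: (upper bound of supplier price, markup in percent).
# The first rule whose upper bound is >= the supplier price applies.
MARGIN_RULES = [
    (Decimal("10"), Decimal("50")),        # cheap items: +50%
    (Decimal("100"), Decimal("30")),       # mid-range items: +30%
    (Decimal("Infinity"), Decimal("15")),  # everything else: +15%
]


def apply_margin(supplier_price: Decimal) -> Decimal:
    """Return the retail price for a supplier price, rounded to cents."""
    for upper_bound, markup_percent in MARGIN_RULES:
        if supplier_price <= upper_bound:
            price = supplier_price * (Decimal("100") + markup_percent) / Decimal("100")
            return price.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
    raise ValueError("no margin rule matched")


for scraped in ("8.00", "40", "250"):
    print(scraped, "->", apply_margin(Decimal(scraped)))
```

`Decimal` is used instead of `float` so that prices round predictably to two places.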

Other advantages include the ability to scrape data behind a login page, to calculate the delivery of goods from one country to another, to collect data from various locations, to scrape non-English websites, etc.

Octoparse

Octoparse is the tool for those who either hate coding or have no experience with it. It features a point-and-click screen scraper that lets users scrape behind login forms, fill in forms, input search terms, scroll through infinite scroll, render JavaScript, and more. It provides a free plan with which you can build up to 10 crawlers.

Parsehub

The nice thing about ParseHub is that it works on multiple platforms, including Mac. However, the software is not as robust as the others, and its tricky user interface could be better streamlined. That said, basic use is dead simple: you click on the data you are interested in, and it exports the result as JSON or an Excel sheet. It offers a free plan with which you can scrape 200 pages in just 40 minutes.

Diffbot.com

Diffbot has been transitioning away from a traditional web scraping tool toward selling prefinished lists, also known as its knowledge graph. Its pricing is competitive and its support team is very helpful, but the data output is often a bit convoluted. Diffbot is the most unusual type of scraping tool on this list: even if the HTML code of a page changes, the tool continues to impress. It is just a bit pricey.

Import.io

Import.io grew very quickly with a free version and a promise that the software would always be free. Today they no longer offer a free version, which caused their popularity to wane. Judging by the reviews at capterra.com, they have the lowest ratings in the data extraction category among the tools in this top 10 list. Most of the complaints are about support and service. They are starting to move from a pure web scraping platform into a scraping and data wrangling operation. They might be making a last-ditch move to survive.

Scrapinghub

Scrapinghub claims to transform websites into usable data with industry-leading technology. Their solutions are “Data on Demand” for big and small scraping projects, with precise and reliable data feeds at very fast rates. They offer lead data extraction and have a team of web scraping engineers. They also offer IP proxy management to scrape data quickly.

Mozenda.com

Mozenda offers two different kinds of web scrapers: downloadable software that lets you build agents and runs in the cloud, and a managed solution where they build the agents for you. They do not offer a free version of the software.

Webharvy

WebHarvy is an interesting company: it shows up as a highly used scraping tool, but its site looks like a throwback to 2009. The tool is quite cheap and worth considering if you are working on small projects. With it you can handle logins, signups, and even form submissions, and you can crawl multiple pages within minutes.

80legs

80legs has been around for many years. It has a stable platform and a very fast crawler. The parsing is not the strongest, but if you need a lot of simple queries fast, 80legs can deliver. Be warned that 80legs has been used for DDoS attacks, and while the crawler is robust, it has taken down many sites in the past.

You can customize the web crawlers to suit your scrapers, including what data gets scraped and which links are followed from each URL crawled. Enter one or more (up to several thousand) URLs you want to crawl; these are the URLs where the web crawl will start. Links from these URLs are followed automatically, depending on the settings of your web crawl. 80legs posts results as the crawl runs. Once the crawl has finished, all of the results are available, and you can download them to your computer or local environment.
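The crawl flow described above (start from seed URLs, follow links according to the crawl settings, collect results as you go) can be sketched as a small breadth-first crawler. This is a generic illustration using only the Python standard library, not 80legs code; the `crawl` function, its `max_depth` and `max_pages` settings, and the in-memory `FAKE_SITE` that stands in for real HTTP requests are all assumptions made for the example.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collect the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(seed_urls, fetch, max_depth=1, max_pages=100):
    """Breadth-first crawl from seed_urls; fetch(url) returns HTML or None."""
    queue = deque((url, 0) for url in seed_urls)
    seen = set(seed_urls)
    results = {}  # url -> absolute links found on that page
    while queue and len(results) < max_pages:
        url, depth = queue.popleft()
        html = fetch(url)
        if html is None:
            continue  # page could not be fetched; skip it
        parser = LinkExtractor()
        parser.feed(html)
        links = [urljoin(url, href) for href in parser.links]
        results[url] = links
        if depth < max_depth:  # the "settings" decide how far links are followed
            for link in links:
                if link not in seen:
                    seen.add(link)
                    queue.append((link, depth + 1))
    return results


# A toy in-memory site so the sketch runs without network access.
FAKE_SITE = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b">B</a>',
    "https://example.com/a": '<a href="/c">C</a>',
    "https://example.com/b": '<a href="/">home</a>',
    "https://example.com/c": "no links here",
}

results = crawl(["https://example.com/"], FAKE_SITE.get, max_depth=1)
for page, links in results.items():
    print(page, "->", links)
```

In a real crawl, `fetch` would make an HTTP request and should respect rate limits and `robots.txt`, which matters given the warning above about crawlers taking sites down.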